The New Yorker dropped a major investigation into Sam Altman yesterday. Later in the day, Anthropic announced it had overtaken OpenAI in revenue. I don't think the timing was an accident.

Anthropic has a habit of making announcements on bad days for competitors. Whether that's coordinated or opportunistic, the effect is the same.

The piece, by Ronan Farrow and Andrew Marantz, draws on over 100 interviews and internal memos from current and former OpenAI insiders, including Ilya Sutskever and Dario Amodei. The portrait is of a CEO whose own colleagues questioned whether he could be trusted not just with the company, but with the technology. If you've been following the OpenAI story, very little of it will surprise you. If you haven't, read it.

Meanwhile, the vibes are considerably better at Anthropic, which has been “having a moment” every day for the past three months. $30 billion in annualized revenue, up from $9 billion at the end of last year. Over 1,000 enterprise customers each spending more than a million dollars annually, a count that doubled in under two months.

I had two conversations this week that made these numbers feel less abstract. Yesterday, a business owner asked me what the actual difference is between Claude and ChatGPT, and whether switching was worth the hassle. This morning, nearly the same conversation with an executive team. I get this question constantly. This week feels like the right time to answer it properly.

Two Labs, Two Bets

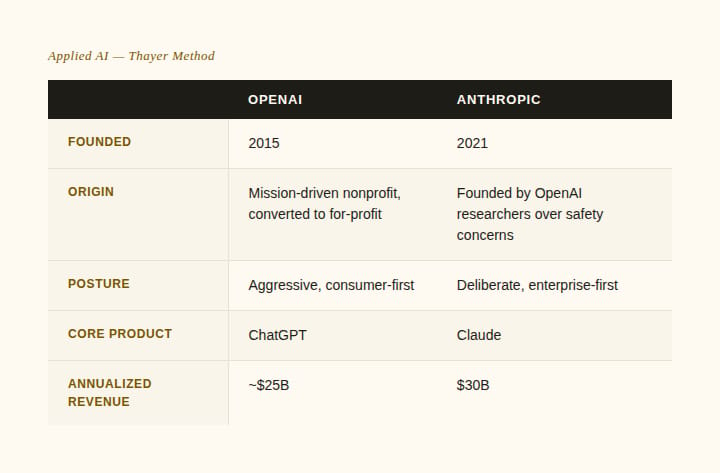

OpenAI was first. ChatGPT launched in late 2022 and became the fastest consumer product in history to reach 100 million users. Anthropic was founded in 2021 by Dario Amodei and a group of researchers who left OpenAI over disagreements about how quickly and carefully to develop the technology.

Anthropic moves more deliberately. Its emphasis on safety and responsible deployment shows up in how the product behaves and how the company communicates. That posture matters specifically to enterprise buyers making seven-figure annual commitments. Procurement teams and legal departments ask different questions than individual users do.

OpenAI built ChatGPT for everyone. Anthropic built Claude for businesses. The two companies have since converged, but the strategic starting point was different, and the revenue reflects it.

Worth knowing before we go further: both companies are spending far more on compute than they're collecting in revenue. Your usage is subsidized at both labs. I wrote about this in The Free Ride last week. The subsidy level shifts constantly, and so does which model is technically ahead. The pendulum swings.

The Products

ChatGPT is OpenAI's consumer interface. Claude is Anthropic's. Both have free tiers and paid subscriptions at similar price points. Both have mobile and desktop apps. Both have projects — persistent workspaces where you can load documents, instructions, and context that carry across every conversation. ChatGPT has a more mature integration ecosystem out of the box — Google Drive, Gmail, Outlook, GitHub. Claude's connector library is still catching up.

The Models

Both labs publish multiple models at different capability and cost levels, and both update them so often that the technical lead keeps shifting.

The model names matter less than how you configure whichever model you're using. A model properly set up with your context will outperform a technically superior model you're running cold.

How They Make Money

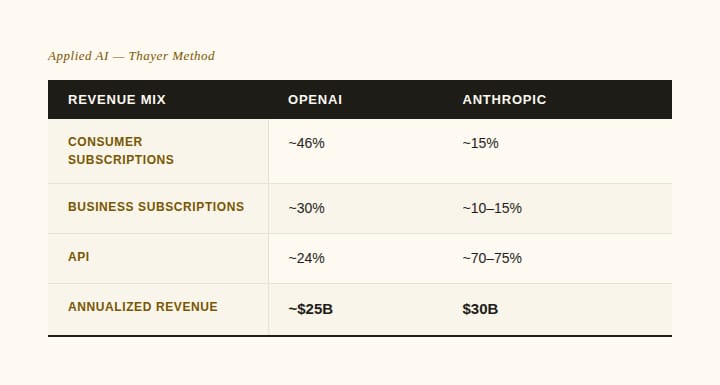

The majority of Anthropic's revenue doesn't come from people chatting with Claude directly. It comes through the API: software companies building products that run on Claude under the hood. When an app drafts your emails, summarizes a document, or handles customer questions, Claude may be doing the work without the interface ever mentioning it. Every one of those interactions generates token usage, and token usage is what gets billed. OpenAI sells API access the same way, but its revenue mix looks very different.

Anthropic has roughly 5% of ChatGPT's consumer user base and is now generating more total revenue. That's the enterprise bet summarized in a single row. Claude has far fewer direct users, but far more of its compute runs inside other products, and that's where the money is.

The Switching Question

The hesitation I hear most often isn't about features or price. It's about context. After months with one model, it feels like it knows you. The other one doesn't yet. That's not a model quality problem — it's a missing context problem.

What you've built up isn't model knowledge. It's configuration: conversation history, preferences, the way you prompt. That lives in your chat history and system prompt, not in the model itself. You can move it.

The AI will help you switch if you ask it to. Export your memory and chat history from ChatGPT. Paste it into a new Claude Project. Ask Claude to write a system prompt based on what it finds. Within a few conversations, you'll have rebuilt most of what felt sticky. The same process works in the other direction — some developers have moved from Claude to ChatGPT recently as Anthropic has tightened usage limits on certain third-party platforms. Switching costs run both ways.
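If your export is too large to paste by hand, a short script can trim it down first. The sketch below is a minimal example, not an official tool. It assumes the export contains a file named conversations.json holding a list of conversations, each with a title and a mapping of message nodes. That layout is an assumption about the export format, so open your own file and check before relying on it. The function name collect_user_messages and the output file name are made up for illustration.

```python
import json
from pathlib import Path


def collect_user_messages(conversations, max_chars=20000):
    """Gather only the messages you wrote into one pasteable block of text.

    `conversations` is assumed to be a list of dicts shaped like:
    {"title": str, "mapping": {node_id: {"message": {...} or None}}}
    """
    lines = []
    for convo in conversations:
        lines.append(f"## {convo.get('title') or 'Untitled'}")
        for node in convo.get("mapping", {}).values():
            message = node.get("message") or {}
            # Skip assistant and system messages; your own prompts carry your preferences.
            if (message.get("author") or {}).get("role") != "user":
                continue
            parts = (message.get("content") or {}).get("parts") or []
            text = " ".join(p for p in parts if isinstance(p, str)).strip()
            if text:
                lines.append(f"- {text}")
    # Truncate so the result stays small enough to paste into a project.
    return "\n".join(lines)[:max_chars]


if __name__ == "__main__":
    export_path = Path("conversations.json")  # assumed file name inside the export
    if export_path.exists():
        data = json.loads(export_path.read_text(encoding="utf-8"))
        Path("context_for_new_project.txt").write_text(
            collect_user_messages(data), encoding="utf-8"
        )
```

Paste the resulting text file into the new project and ask the model to draft a system prompt from it. Keeping only your own messages is a design choice: your prompts show how you work, and the other model's old answers mostly add bulk.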

The honest answer to which you should use: configure both properly and try each for a real week of work, not a ten-minute test. The pendulum on which is technically ahead will keep swinging. Your configuration is the durable edge — and you can take it with you.

The Assignment

Pick something you do regularly: drafting a memo, summarizing a document, thinking through a decision. Do it in your usual model. Then do the same task in the competitor. Same prompt, same inputs. See what comes back. A switching decision made after a real test is worth more than one made on reputation alone.

If the test convinces you to switch, the instructions are above. If it doesn't, you've confirmed your choice with firsthand data.

Quick Hits

Meta's token leaderboard. Meta employees are competing on an internal dashboard called Claudeonomics to see who can consume the most AI tokens. Top titles include "Token Legend" and "Session Immortal." Over a recent 30-day period, the top 250 users consumed over 60 trillion tokens combined. The top individual hit 281 billion in a month. Measuring token consumption and calling it productivity is the same mistake as measuring lines of code written. Input isn't output.

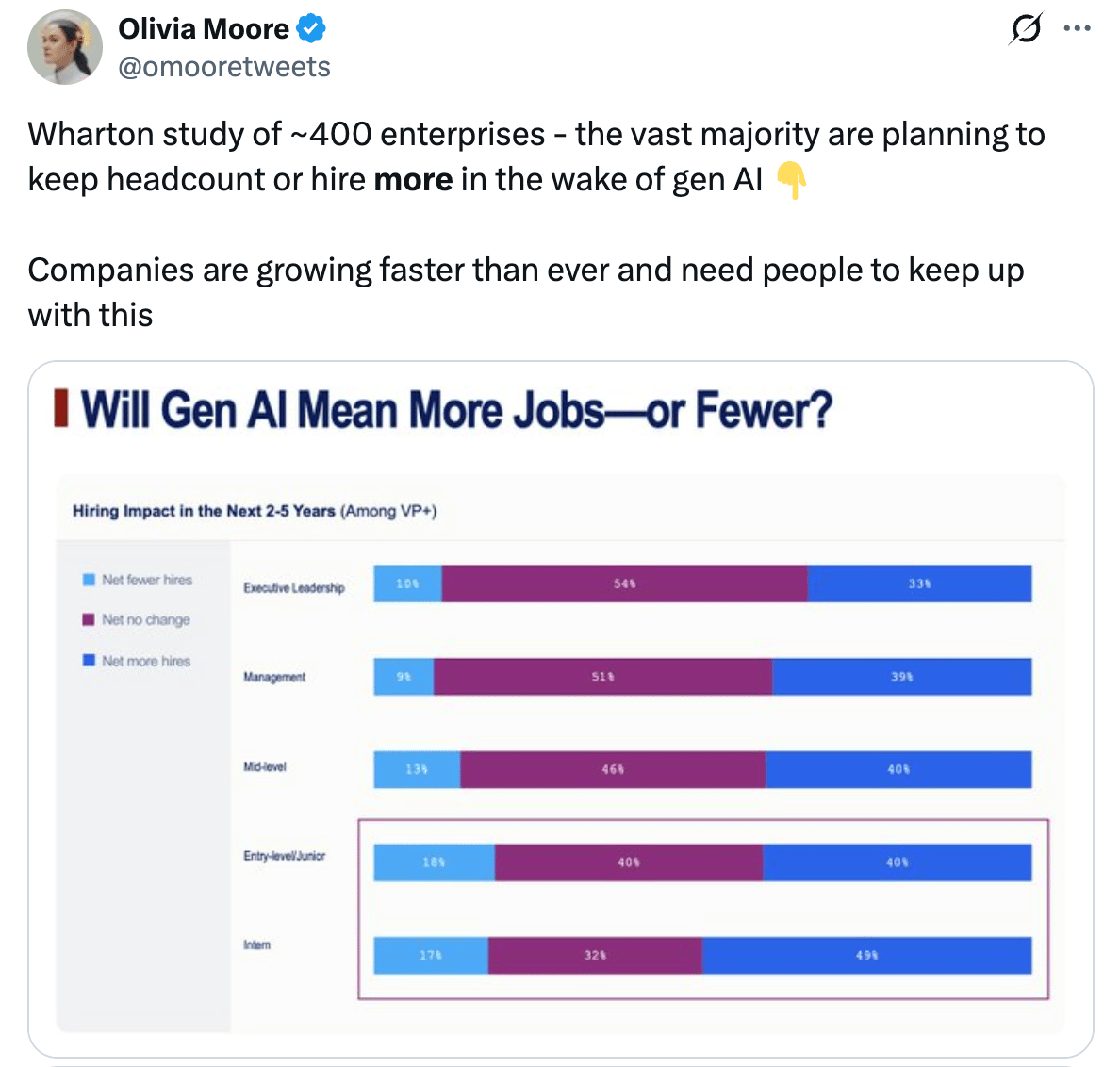

More jobs, not fewer. A Wharton study of roughly 400 enterprises found the vast majority plan to maintain or grow headcount in the wake of generative AI. Entry-level and intern roles face the most pressure. Senior roles are net positive. MIT's CSAIL published research this week reinforcing the point: AI is advancing through the labor market more like a rising tide than a crashing wave, a broad and gradual shift rather than a concentrated disruption of specific roles. If you're reading this newsletter, the data is probably in your favor. But only if you're building the skills.